
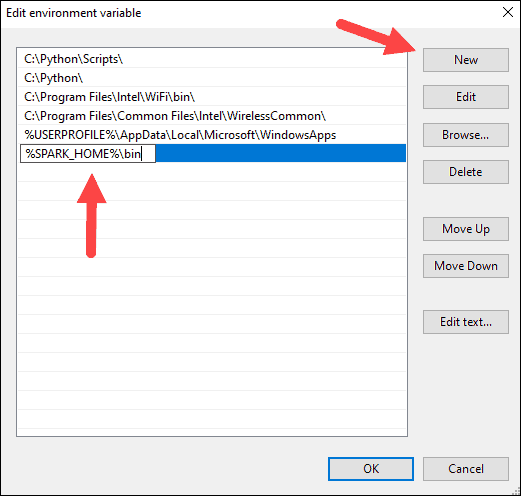

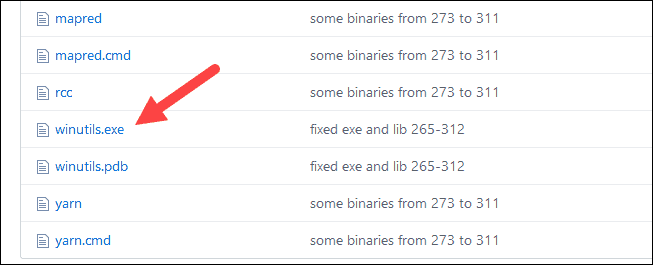

Apache Spark 3.0.0 Installation on Linux Guide

Apache Spark is a cluster computing framework for large-scale data processing, which aims to run programs in parallel across many nodes in a cluster of computers or virtual machines. It combines a stack of libraries including SQL and DataFrames, MLlib, GraphX, and Spark Streaming.

This article provides a step-by-step guide to installing the latest version of Apache Spark 3.0.0 (the same steps apply to 3.0.1) on a UNIX-like system (Linux) or on Windows Subsystem for Linux (WSL). These instructions can be applied to Ubuntu, Debian, Red Hat, OpenSUSE, macOS, etc. In this post, we will install Apache Spark on the Hadoop cluster we created in the previous post. Installing Apache Spark on Windows 10 may seem complicated to novice users, but this simple tutorial will have you up and running.

Follow the steps given below to install Spark. If you already have Java 8 and Python 3 installed, you can skip the first two steps.

Step 1: Install Java 8. Apache Spark requires Java to run.

Download the latest version of Spark from the following link: Download Spark. For this tutorial, we are using the spark-1.3.1-bin-hadoop2.6 package. After downloading it, you will find the Spark tar file in your download folder.

If you also run the Apache HTTP Server (a separate project from Spark) on Windows, note that Apache HTTP Server versions later than 2.2 will not run on any operating system earlier than Windows 2000; the primary Windows platform for running Apache 2.4 is Windows 2000 or later. Always obtain and install the current service pack to avoid operating system bugs. The Apache service is managed with these options:

- `-k install`: install an Apache service
- `-k config`: change the startup options of an Apache service
- `-k uninstall`: uninstall an Apache service

That's all. Thank you for reading this post; I hope this simple guide helps you install Apache Spark on your own Windows machine.

Question: I'm trying to use a Window function partitioned on a nested column. I'm not sure whether this is a bug or just incorrect syntax; I searched around and didn't see it mentioned elsewhere, so I'm asking here before filing a bug report. I've created a small example below demonstrating the problem:

```scala
import org.apache.spark.sql.expressions.Window
import org.apache.spark.sql.functions.{max, struct}
import sqlContext.implicits._

// `source` is a placeholder for a DataFrame with columns "A", "B", "C" and "num";
// the code that builds it is not part of the original example.
val data = source
  .withColumn("Data", struct("A", "B", "C"))

// Partition on the nested fields Data.A and Data.B, highest "num" first
val winSpec = Window.partitionBy("Data.A", "Data.B").orderBy($"num".desc)

// Keep only the rows whose "num" equals the maximum within their partition
data.select($"*", max("num").over(winSpec) as "max")
  .where("num = max")
  .drop("max")
  .show()
```

This fails with:

```
AnalysisException: resolved attribute(s) A#39,B#40 missing from num#33,Data#37 in operator !Project
  at .$class.failAnalysis(CheckAnalysis.scala:38)
  at .(Analyzer.scala:44)
```

If instead those columns aren't nested, it works fine. Am I missing something with the syntax, or is this a bug?

Answer: It looks to me like you are hitting a bug when the analyzer tries to expand the `*`. By turning off eager analysis (so that we can call `explain` without it throwing an error), you can see in the `== Parsed Logical Plan ==` section of the output that the `*` is getting expanded to include columns that aren't actually available:

```scala
sql("SET =false") // Let us see the error even though we are constructing an invalid tree
data.select($"*", max("num").over(winSpec) as "max").explain(true)
```

If you manually list out the columns instead of using a `*`, that should act as a workaround.
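The query in the question keeps, for each combination of the nested fields `Data.A` and `Data.B`, only the rows with the highest `num`. As a rough illustration of that intended result (not of how Spark executes it), here is a plain Scala collections sketch; the case classes `Data` and `Record` and the sample rows are hypothetical. Because the window is ordered by `num` descending and Spark's default frame for an ordered window runs from the start of the partition to the current row, the running `max` at every row already equals the partition maximum, so a simple per-group maximum selects the same rows.

```scala
// Hypothetical model of the example's schema: a struct column "Data" with
// fields A, B, C, plus a numeric column "num".
final case class Data(a: Int, b: Int, c: Int)
final case class Record(num: Int, data: Data)

object MaxPerNestedKey {
  // Same result as partitioning on (Data.A, Data.B), computing max("num")
  // per partition and filtering with "num = max". Ties are kept, because
  // "num = max" keeps every row that reaches the maximum.
  def keepMaxPerGroup(rows: Seq[Record]): Seq[Record] = {
    // Maximum "num" for each (Data.A, Data.B) key
    val maxByKey: Map[(Int, Int), Int] =
      rows.groupBy(r => (r.data.a, r.data.b)).map { case (key, group) =>
        key -> group.map(_.num).max
      }
    // Keep rows in their original order when they match their group's maximum
    rows.filter(r => r.num == maxByKey((r.data.a, r.data.b)))
  }

  def main(args: Array[String]): Unit = {
    val rows = Seq(
      Record(5, Data(1, 1, 10)),
      Record(7, Data(1, 1, 11)),
      Record(3, Data(1, 2, 12)),
      Record(3, Data(1, 2, 13)),
      Record(9, Data(2, 1, 14))
    )
    keepMaxPerGroup(rows).foreach(println)
  }
}
```

Running `main` prints the four rows that reach their group's maximum; both rows of the (1, 2) group are kept because they tie at 3.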
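Step 1 of the installation asks for Java 8. One way to see which Java version a JVM is running is the standard `java.version` system property. The sketch below parses both the pre-Java 9 format (`1.8.0_292`) and the newer format (`11.0.2`, `17`) into a major version number; the object name `JavaVersionCheck` and the "Java 8 or newer" threshold are assumptions for illustration, not part of the original guide.

```scala
object JavaVersionCheck {
  // Parses values of the "java.version" system property into a major version:
  //   "1.8.0_292" -> 8 (pre-Java 9 scheme), "11.0.2" -> 11, "17" -> 17
  def majorVersion(version: String): Int = {
    val parts = version.split("[._-]")
    if (parts(0) == "1" && parts.length > 1) parts(1).toInt else parts(0).toInt
  }

  def main(args: Array[String]): Unit = {
    val version = System.getProperty("java.version")
    val major = majorVersion(version)
    // Assumed threshold: Java 8 or newer counts as sufficient here.
    if (major >= 8) println(s"Java $version detected (major version $major)")
    else println(s"Java $version is older than Java 8; install Java 8 first")
  }
}
```

Run it with the same JVM you intend to use for Spark so that the reported version matches the one Spark will see.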


Author: Toby
